Threat feeds are everywhere. They're cheap, they're plentiful, and they're here to HELP YOU BLOCK THE ENTIRE INTERNET.

The hardest part isn't getting the data, it's making sure there's something between IT and your NETWORK. Something that helps you leverage the data AND rest easy at night knowing that you're not blocking 0.0.0.0/0. In earlier versions of CIF, we accidentally helped some friends block things like netflix.com, facebook.com and 1.1.1.1/1 (can you guess what that little gem does to a network?). That last one didn't even start out as an IP address, it was a phishing URL: hxxp://1.1.1.1/1. It turns out a regex that wasn't anchored correctly skimmed the IP and netmask out of the URL and dumped the result into a high-confidence IP block feed (sorry).
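To make that failure mode concrete, here is a minimal sketch of how an unanchored pattern can turn a URL into a netblock. It is not the original CIF code; the patterns and the `extract_loose`, `extract_strict` and `blast_radius` names are assumptions made up for illustration.

```python
import ipaddress
import re

# Hypothetical pattern: an IPv4 address with an optional /prefix.
IP_CIDR = re.compile(r"\d{1,3}(?:\.\d{1,3}){3}(?:/\d{1,2})?")


def extract_loose(indicator):
    """Buggy on purpose: search() finds the pattern ANYWHERE in the string."""
    m = IP_CIDR.search(indicator)
    return m.group(0) if m else None


def extract_strict(indicator):
    """fullmatch() only succeeds if the WHOLE string is an IP or CIDR."""
    m = IP_CIDR.fullmatch(indicator)
    return m.group(0) if m else None


def blast_radius(cidr):
    """Return the real network a CIDR string covers and its address count."""
    net = ipaddress.ip_network(cidr, strict=False)
    return str(net), net.num_addresses


url = "hxxp://1.1.1.1/1"
print(extract_loose(url))    # the URL path "/1" is mistaken for a netmask
print(extract_strict(url))   # None: a URL is not an IP indicator
print(blast_radius("1.1.1.1/1"))
```

With `strict=False`, `ipaddress` normalizes `1.1.1.1/1` to `0.0.0.0/1`, which is half of the IPv4 address space. Anchoring helps, but a plain `1.1.1.1/1` would still pass the strict pattern, so a prefix-length sanity check is worth having as well.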

We've learned over the years, by doing, what to filter, what not to filter and how to draw those lines depending on the feed you're working with. For a list of SSH scanners, you may not want to filter anything. If you're implementing this feed correctly, you're only checking SYNs on INBOUND traffic and it should almost NEVER fire a false positive. We see SSH scanners inbound from netblocks owned by or near things like qq[.]com, jd[.]com and Alibaba networks all the time. You shouldn't necessarily be blocking outbound traffic to those sites, but you should definitely be blocking inbound tcp/22 SYNs.

In a previous engagement, we hosted "common threat feeds" for a large number of universities in the US. This effort posed a number of technological and political challenges in itself, not the least of which was "does a single whitelist work for all consumers?" The short answer is no. You'll quickly learn that the more advanced consumers will want you to filter LESS.

Advanced CSIRTs tend to have their own filters already in place and actually VALUE that squishy medium-confidence data. They understand how to apply it effectively and it usually helps them with the hard-to-detect stuff. The less advanced CSIRTs will typically want the same, except they usually don't have their own filtering in place. They tend to take-all-the-data and apply-it-liberally-to-all-the-things. What we've learned over the years is to actually hide things by default and provide a few breadcrumbs that help them "learn as they go".
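One way to picture "hide things by default" is a per-consumer confidence floor. This is a sketch only: the `Indicator` type, the 0 to 10 confidence scale and the default threshold are assumptions for illustration, not how CIF is actually wired.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    value: str
    confidence: float  # assumed scale: 0 (no trust) to 10 (high trust)


# Assumed default: new consumers only see the high-confidence data.
DEFAULT_MIN_CONFIDENCE = 8.0


def feed_for(indicators, min_confidence=DEFAULT_MIN_CONFIDENCE):
    """Return only the indicators at or above the consumer's confidence floor."""
    return [i for i in indicators if i.confidence >= min_confidence]


indicators = [
    Indicator("c2.example.com", 9.0),
    Indicator("maybe-bad.example.net", 5.0),
]

default_view = feed_for(indicators)                       # hidden by default
advanced_view = feed_for(indicators, min_confidence=4.0)  # opted in to squishy data
```

The advanced consumer asks for a lower floor and gets the medium-confidence data. Everyone else gets the short, safe list until they ask for more.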

You protect them [from themselves] by giving them a list of C2s and giving them time to wrap their heads around the problem space a bit: to understand how to properly apply things like SYN filters, build up a false-positive process and gain trust in that process. When they figure out you've been holding out on them, they'll be annoyed, but that's how you'll know they're ready. If they never get upset about the lack of data access, you'll know they're not quite there yet. It's a constant engagement, helping them grow as a team while you use the tools to teach.

https://github.com/csirtgadgets/ip-filter

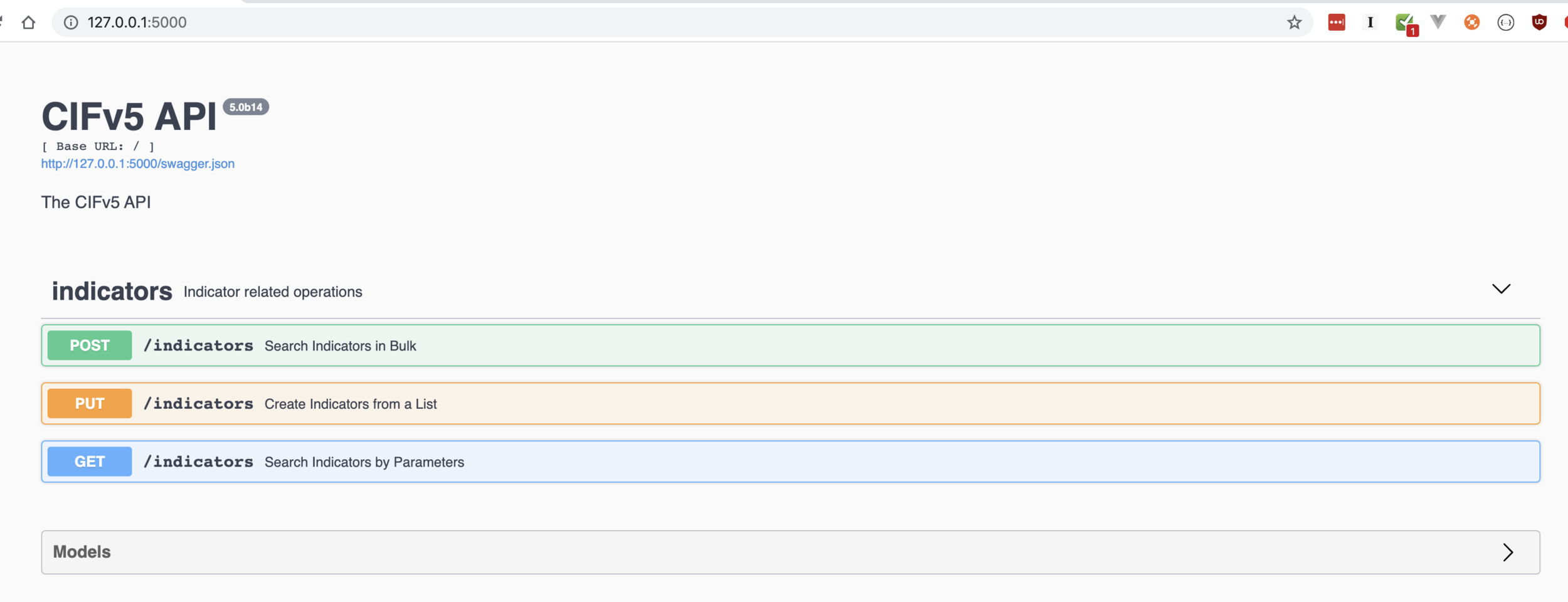

Over the years we've tried to bake this technology right into the indicator framework. This makes CIF one of the most adaptive threat intelligence technologies in the space. It also means you have to use it (or copy and paste pieces of it) to leverage it. As I've been thinking through CIFv5, I've started abstracting a lot of the "things that make CIF, CIF" out into more useful utilities you can embed into your own frameworks.
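To give a feel for what a small, embeddable filter might look like, here is a minimal sketch built on Python's standard `ipaddress` module. It is NOT the ip-filter API or CIF code; the `WHITELIST` entries, the `MIN_PREFIX_V4` threshold and the `is_safe_to_block` name are all assumptions for illustration.

```python
import ipaddress

# Illustrative whitelist only; a real one would be far longer and maintained.
WHITELIST = [ipaddress.ip_network(n) for n in ("1.1.1.1/32", "8.8.8.8/32")]

# Assumed threshold: refuse any IPv4 block wider than a /8.
MIN_PREFIX_V4 = 8


def is_safe_to_block(indicator):
    """Return True only if the indicator parses as an IP or CIDR, is not
    absurdly wide, and does not overlap anything on the whitelist."""
    try:
        net = ipaddress.ip_network(indicator, strict=False)
    except ValueError:
        return False  # URLs, hostnames and other junk never reach a block list
    if net.version == 4 and net.prefixlen < MIN_PREFIX_V4:
        return False  # catches 0.0.0.0/0, 1.1.1.1/1 and friends
    return not any(
        net.version == w.version and net.overlaps(w) for w in WHITELIST
    )


for candidate in ("0.0.0.0/0", "1.1.1.1/1", "hxxp://1.1.1.1/1", "1.1.1.0/24", "192.0.2.10"):
    print(candidate, is_safe_to_block(candidate))
```

Parsing the indicator into a real network object, rather than pattern matching on text, is what lets the check reason about overlap and prefix width at all.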

It's not that it's hard, or even magical, it's that most security operators really don't know what they don't know. They use things like regex, lists, hashes or shell scripts and hope (and pray) that an FP doesn't make its way in. This ends up being the difference between growing your own robust threat intelligence platform and "just buying something off the shelf". Most of those providers are also afraid of letting you get 'a false positive', well, because you probably won't stop paying them for things they miss.

That, in and of itself, is ALSO a risk.